Conversation

lain

lain@lain.com

snac2 proudly surviving minuscule loads (like all other fedi servers), but it also has zero tests.

RE: https://soc.octade.net/octade/p/1775197590.747947

feld

feld@friedcheese.us

YBG pern

pernia@cum.salon

@feld @i @kirby @lain pleroma works great on mid-sized servers and mid-sized loads. It's awful on actual constrained environments. cum.salon used to be on the cheapest frantec server, and back then we called it "single user at a time" instance because only 1 person could reliably post at a time. snac never chugged there, and snac doesn't bloat up the db (cuz it doesn't hv one, hehe. That also makes it work more nicely with zfs than postgres servers).

theorytoe

theorytoe@ak.kyaruc.moe

feld

feld@friedcheese.us

@pernia @i @lain @kirby

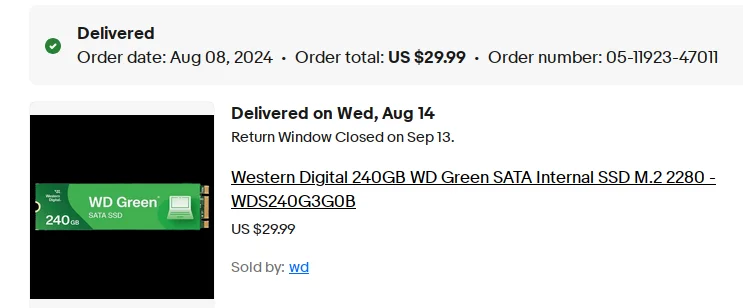

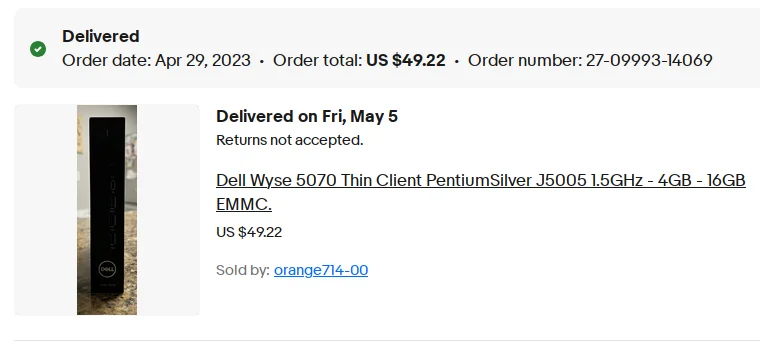

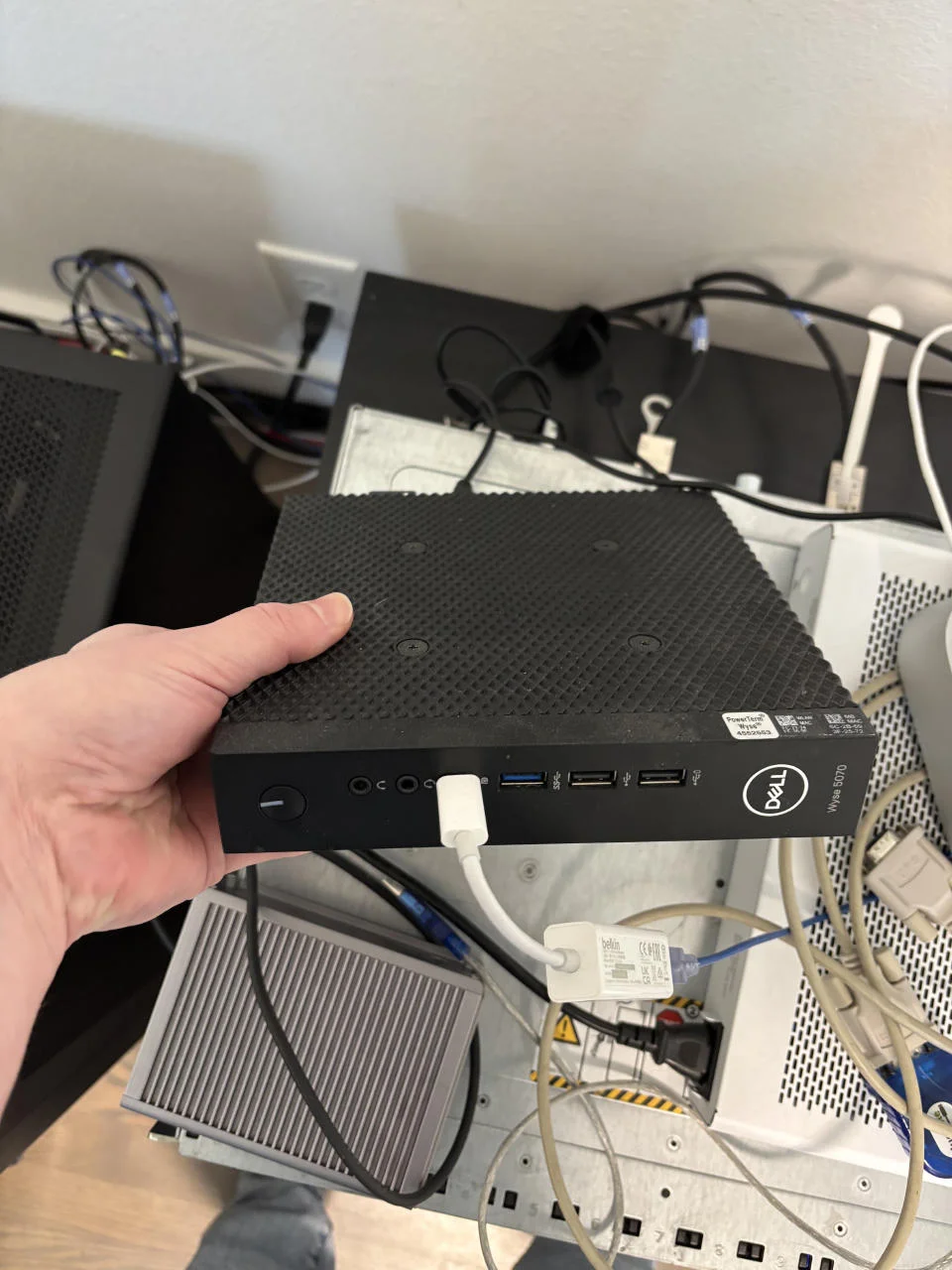

look at this fucking thing. It is worthless. But it has flash storage, 224GB SSD that cost me $30. The computer itself cost me $50 on eBay.

I can get ~500MB/s reads and 35,000 IOPS on this underpowered turd

> sudo diskinfo -ti ada0

ada0

512 # sectorsize

240057409536 # mediasize in bytes (224G)

468862128 # mediasize in sectors

0 # stripesize

0 # stripeoffset

465141 # Cylinders according to firmware.

16 # Heads according to firmware.

63 # Sectors according to firmware.

WD Green M.2 2280 240GB # Disk descr.

24111Q800334 # Disk ident.

ahcich0 # Attachment

Yes # TRIM/UNMAP support

0 # Rotation rate in RPM

Not_Zoned # Zone Mode

Seek times:

Full stroke: 250 iter in 0.051117 sec = 0.204 msec

Half stroke: 250 iter in 0.077006 sec = 0.308 msec

Quarter stroke: 500 iter in 0.121437 sec = 0.243 msec

Short forward: 400 iter in 0.100602 sec = 0.252 msec

Short backward: 400 iter in 0.093022 sec = 0.233 msec

Seq outer: 2048 iter in 0.369685 sec = 0.181 msec

Seq inner: 2048 iter in 0.454619 sec = 0.222 msec

Transfer rates:

outside: 102400 kbytes in 0.213660 sec = 479266 kbytes/sec

middle: 102400 kbytes in 0.205639 sec = 497960 kbytes/sec

inside: 102400 kbytes in 0.221528 sec = 462244 kbytes/sec

Asynchronous random reads:

sectorsize: 105132 ops in 3.003777 sec = 35000 IOPS

4 kbytes: 102913 ops in 3.003295 sec = 34267 IOPS

32 kbytes: 33816 ops in 3.011817 sec = 11228 IOPS

128 kbytes: 7671 ops in 3.050182 sec = 2515 IOPS

1024 kbytes: 1668 ops in 3.249046 sec = 513 IOPS

y'all gotta stop trying to run Pleroma on servers that have too shitty of IOPS to run a Postgres database. That's the core problem. People get the cheapest VPS on the planet that gives them 5 IOPS per minute and then complain that they can't have more than one user without it freezing

also please stop trying to subscribe to every relay on the fediverse to archive every post that ever existed. Pleroma was not meant for that.
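For anyone who wants to sanity-check the numbers in that diskinfo output, here is a rough sketch that recomputes the IOPS column from the ops and elapsed time on each "Asynchronous random reads" row, plus the throughput each row implies. The figures are copied from the output above; the function names are made up for illustration, and it assumes diskinfo's "kbytes" means 1024 bytes.

```python
# Rows copied from the "Asynchronous random reads" section above:
# (block size in bytes, operations completed, elapsed seconds)
RANDOM_READS = [
    (512, 105132, 3.003777),           # "sectorsize" row; sector size is 512 bytes
    (4 * 1024, 102913, 3.003295),      # assumes 1 kbyte = 1024 bytes
    (32 * 1024, 33816, 3.011817),
    (128 * 1024, 7671, 3.050182),
    (1024 * 1024, 1668, 3.249046),
]


def iops(ops: int, seconds: float) -> float:
    """Operations per second, as reported in the last column."""
    return ops / seconds


def throughput_mb_s(block_bytes: int, ops: int, seconds: float) -> float:
    """Implied data rate in decimal megabytes per second."""
    return block_bytes * ops / seconds / 1_000_000


if __name__ == "__main__":
    for block, ops, secs in RANDOM_READS:
        print(
            f"{block:>8} B  "
            f"{iops(ops, secs):>8.0f} IOPS  "
            f"{throughput_mb_s(block, ops, secs):>7.1f} MB/s"
        )
```

The small-block rows are the ones that matter for a database: at 4 kbytes the drive is IOPS-bound, and only the 1024-kbyte row gets close to the sequential transfer rate.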

Phantasm

phnt@fluffytail.org

@feld @pernia @i @lain @kirby I get 35MB/s max speeds (~15x slower) and 1K IOPS (35x fewer), capped. Subscribed to SPW, FSE, and Baest (when that existed) relays since almost day one. Sure, repack takes like 6 hours, but this is a single-user instance and I run it on one of the worst VPS disks I've seen (speed-wise), only dethroned by BuyVM's slab storage, now kinda on purpose. Also running PostgreSQL 13, probably leaving a lot of performance on the table. Yet, it works. 3 years, 40GB, DB maintained probably twice a year with minimal work done. PostgreSQL can run on absolute shit VPS hardware, if you know how to optimize it, but not everyone does.

The configurations in the attached images are a joke though. 2GB of RAM and 2 vCPUs should be the minimum requirement listed now, and only for a single-user instance. 1GB of RAM and 1 vCPU is probably undoable after a few months of uptime.

image.png
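The two multipliers in this post can be checked against the figures quoted earlier in the thread (~500MB/s reads and 35,000 IOPS on the thin client, versus 35MB/s and 1K IOPS on the VPS). A minimal sketch, with variable and function names invented for illustration:

```python
def times_slower(fast: float, slow: float) -> float:
    """How many times larger the fast figure is than the slow one."""
    return fast / slow


# Figures as quoted in the thread (approximate, per the posters).
thin_client_read_mb_s = 500
thin_client_iops = 35_000
vps_read_mb_s = 35
vps_iops = 1_000

if __name__ == "__main__":
    print(f"sequential reads: ~{times_slower(thin_client_read_mb_s, vps_read_mb_s):.1f}x")
    print(f"random-read IOPS: {times_slower(thin_client_iops, vps_iops):.0f}x")
```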

NonPlayableClown

NonPlayableClown@postnstuffds.lol

Yuck, a Wyse thin client. The hardware sucks and their management software is even worse.

I would use any other OptiPlex and rebuild it to use VDI.

Phantasm

phnt@fluffytail.org

@feld @i @kirby @lain @pernia

>I run it on one of the worst VPS disks I've seen (speed wise), only dethroned by BuyVM's slab storage, now kinda on purpose

Actually no. The OVH 4MB/s slab storage special that used to run oban.borked.technology was the worst, yet it could still handle very large Mastodon relays without issues.

YBG pern

pernia@cum.salon

@feld @i @lain @kirby idk how you think relatively new hardware (to say, not some 486 pc running win3.1) is ever gonna be worthless compared to a virtual machine. as gay as your computer is its gonna have more iops and run postgres better.

It's not even equivalent, frankly, because one is gonna be a homelab experience that you will have to baby and the other is gonna take tree fiddy bucks a month.

Snac2 *can* run on a shitty vps with 5 IOPS, thats the point. It beats pleroma at the constraint game. it doesnt even need an overengineered elephant db to work, or an gay lisp thats never packaged on my distro repos. All it requires is a C compiler, and if you use openbsd, you even get pledge() with it.

feld

feld@friedcheese.us

@pernia @i @lain @kirby

> one is gonna be a homelab experience that you will have to baby and the other is gonna take tree fiddy bucks a month.

does your VPS provider log in and run updates/patches for you? that's all you have to do with your homelab server. you're really exaggerating the amount of effort involved here

YBG pern

pernia@cum.salon

feld

feld@friedcheese.us

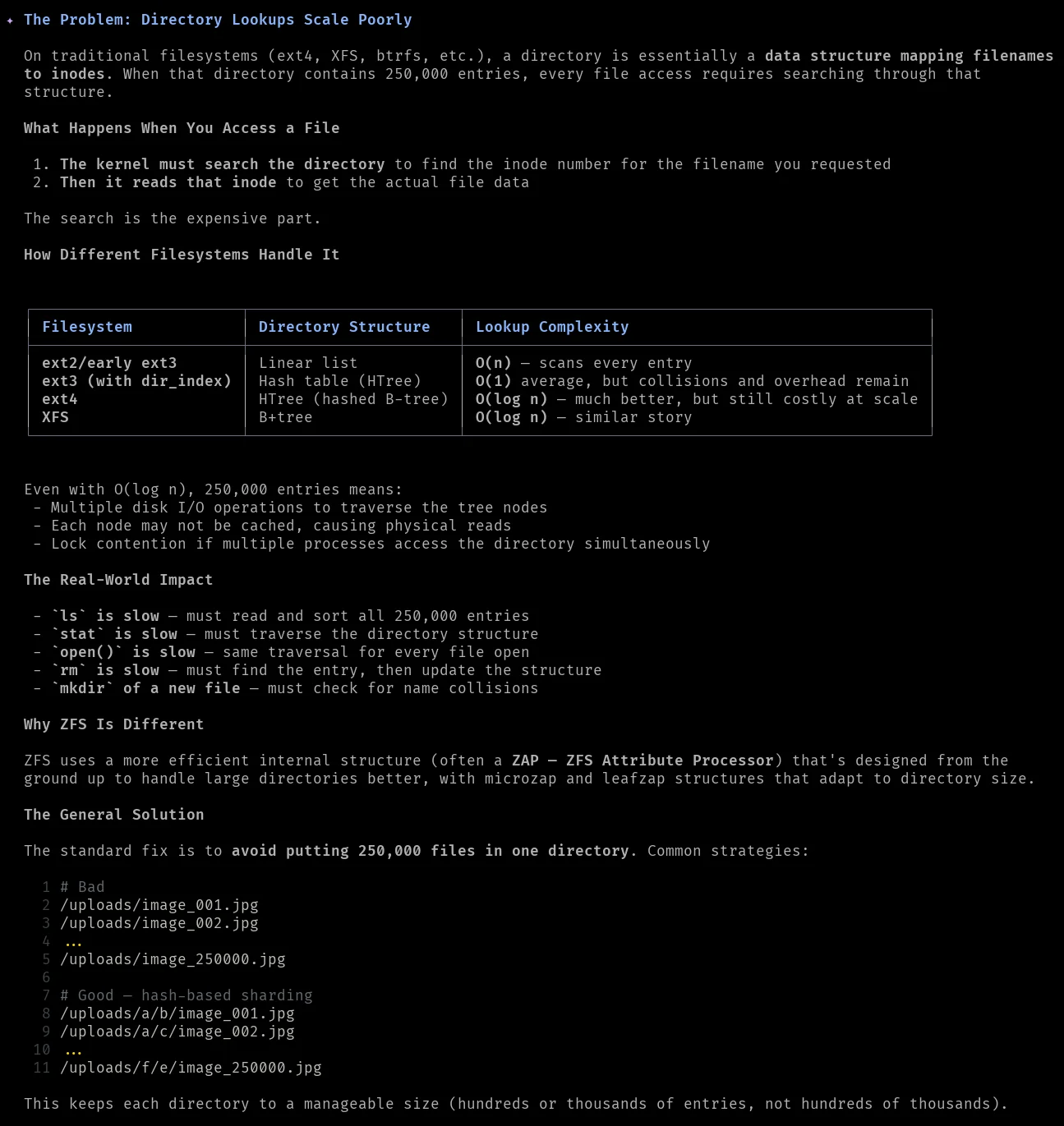

@pernia @i @lain @kirby a really good example that many sysadmins have experienced is when you have a mail server using maildir for IMAP storage and someone has a few hundred thousand files in a single mail folder and complains that their mail is slow

if you've managed email for a company within the last 25 years you've probably encountered this
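For readers who haven't run into the maildir case: each message is one file, so a folder with a few hundred thousand messages is a directory with a few hundred thousand entries. Below is a rough sketch of how one might spot such folders. It assumes the usual Maildir layout where each folder has `cur` and `new` subdirectories; the function names and the threshold are made up for illustration.

```python
import os


def count_messages(maildir: str) -> int:
    """Count message files in one Maildir folder (its cur/ and new/ subdirs)."""
    total = 0
    for sub in ("cur", "new"):
        path = os.path.join(maildir, sub)
        if os.path.isdir(path):
            with os.scandir(path) as entries:
                total += sum(1 for entry in entries if entry.is_file())
    return total


def oversized_folders(root: str, threshold: int = 100_000) -> dict:
    """Map each Maildir folder under root to its message count if >= threshold."""
    found = {}
    for dirpath, dirnames, _ in os.walk(root):
        # A directory containing both cur/ and new/ is treated as a Maildir folder.
        if "cur" in dirnames and "new" in dirnames:
            n = count_messages(dirpath)
            if n >= threshold:
                found[dirpath] = n
    return found


if __name__ == "__main__":
    # Hypothetical path; point this at a real mail spool to try it.
    for folder, n in oversized_folders("/var/mail/example").items():
        print(f"{n:>8}  {folder}")
```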
